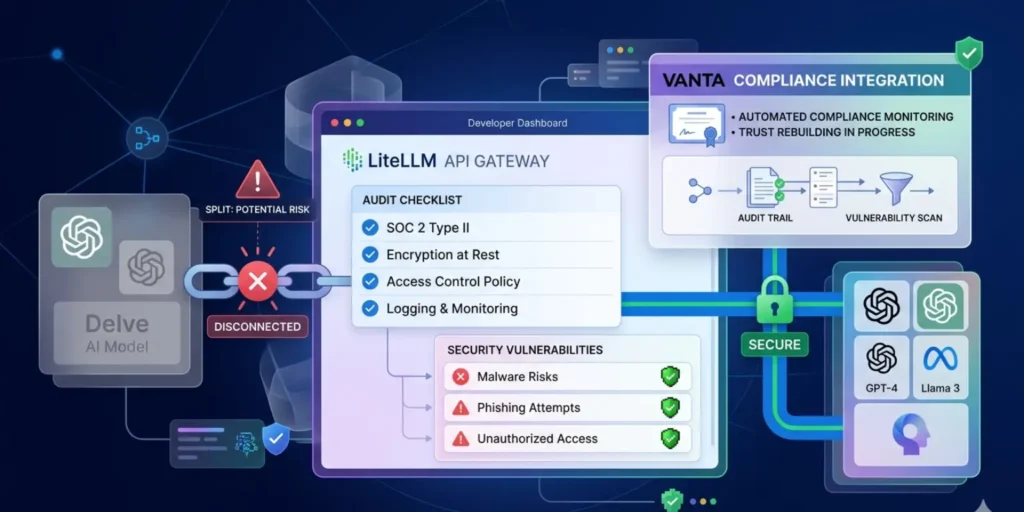

LiteLLM platform interface with broken link to Delve, malware warning symbols, and secure connection to a new compliance provider, highlighting security concerns.

A major shake-up has hit the AI infrastructure space. LiteLLM, a widely used platform that helps developers connect to multiple AI models, has announced it is cutting ties with compliance startup Delve following a week of security concerns and public allegations.

The decision comes after a malware incident and growing doubts about the reliability of Delve’s compliance certifications. For a company used by millions of developers, the move signals a serious effort to rebuild trust.

What LiteLLM Does

LiteLLM is not a typical consumer-facing AI tool. Instead, it acts as a gateway layer for developers, allowing them to connect to different AI models through a single interface.

This makes it an important part of the AI ecosystem. Many companies rely on it to manage requests, control costs, and switch between models without rewriting code.
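The gateway idea described above can be illustrated with a small, self-contained sketch. This is not LiteLLM's code or API: the `Gateway` class, the two fake providers, and the flat per-request costs are all hypothetical names and values invented for illustration. It only shows the general pattern of one call signature in front of several interchangeable backends, with spend tracked in one place.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# A provider is modeled as a plain function from prompt to reply.
Provider = Callable[[str], str]


def fake_provider_a(prompt: str) -> str:
    # Hypothetical stand-in for a real model API call.
    return f"[model-a] {prompt}"


def fake_provider_b(prompt: str) -> str:
    # A second hypothetical backend with different behavior.
    return f"[model-b] {prompt.upper()}"


@dataclass
class Gateway:
    """Toy gateway: one call signature, many interchangeable backends."""

    providers: Dict[str, Provider]
    # Assumed flat cost per request for each model, in arbitrary units.
    cost_per_request: Dict[str, float]
    spend: Dict[str, float] = field(default_factory=dict)

    def complete(self, model: str, prompt: str) -> str:
        if model not in self.providers:
            raise KeyError(f"unknown model: {model}")
        # Record spend centrally so costs are visible in one place.
        cost = self.cost_per_request[model]
        self.spend[model] = self.spend.get(model, 0.0) + cost
        return self.providers[model](prompt)


gateway = Gateway(
    providers={"model-a": fake_provider_a, "model-b": fake_provider_b},
    cost_per_request={"model-a": 1.0, "model-b": 2.5},
)

# Switching models changes only the model name, not the calling code.
reply_a = gateway.complete("model-a", "hello")
reply_b = gateway.complete("model-b", "hello")
```

Because every request passes through `complete`, a single layer sees all traffic and all credentials, which is why a compromise at this layer matters so much for downstream users.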

Because of this central role, security and compliance are critical. Any weakness in LiteLLM’s systems could affect a large number of downstream applications and users.

The Security Incident That Triggered the Fallout

The situation escalated after LiteLLM’s open-source version was hit by credential-stealing malware. This type of attack is especially serious. Instead of just disrupting systems, it aims to collect sensitive data such as:

- API keys

- Developer credentials

- Access tokens

Once stolen, these credentials can be used to access systems, run unauthorized AI queries, or even launch further attacks. For a platform like LiteLLM, which sits between developers and AI models, this creates a high-risk scenario. Even if the attack is limited in scope, it raises concerns about how secure the platform really is.

The Role of Compliance Certifications

Before the incident, LiteLLM had obtained two security compliance certifications through Delve. These certifications are meant to show that a company follows proper procedures to:

- Protect user data

- Detect threats

- Respond to security incidents

- Maintain internal controls

In simple terms, they are supposed to give customers confidence that the platform is safe to use. However, the value of these certifications depends entirely on how trustworthy the auditing process is.

Allegations Against Delve

Trouble began when Delve was accused of misleading customers about the quality of its compliance checks. According to claims that surfaced publicly:

- Some compliance data may have been fabricated or simulated

- Auditors may have approved reports without proper verification

- The overall process may not have met industry standards

These are serious allegations. If true, they would mean that companies relying on Delve’s certifications were given a false sense of security.

Delve’s Response

Delve’s founder has denied all allegations.

The company responded by:

- Offering free re-tests and audits to customers

- Rejecting claims of misconduct

- Attempting to reassure clients about its processes

However, the situation did not settle.

An anonymous whistleblower doubled down on the accusations and reportedly released evidence to support their claims, further intensifying the controversy. This created a trust problem that was difficult for customers to ignore.

LiteLLM’s Decision

In response, LiteLLM moved quickly.

CTO Ishaan Jaffer announced publicly that the company will:

- Stop working with Delve

- Redo its compliance certifications

- Bring in a new compliance provider

- Use an independent third-party auditor

LiteLLM has chosen Vanta as its new compliance partner.

This decision reflects a clear shift toward greater transparency and independence in its security processes.

Why This Matters

This story is not just about one company changing vendors. It highlights several important issues in the fast-growing AI industry.

1. Trust in AI Infrastructure

AI tools are being adopted at a rapid pace. But behind the scenes, they depend on layers of infrastructure like LiteLLM. If those layers are not secure, the risks multiply quickly.

2. The Limits of Compliance Labels

Certifications are often treated as proof of security. But this situation shows that:

- Not all certifications are equal

- The auditing process matters as much as the result

- Companies must verify, not just trust

In other words, a certificate alone does not guarantee safety.

3. Open Source Risks

LiteLLM’s open-source version being targeted also highlights a broader issue.

Open-source software is powerful and flexible, but it can also be:

- Easier to modify and exploit

- Distributed without strict controls

- Dependent on community vigilance

This does not make open source unsafe, but it does mean security must be handled carefully.
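One common way to handle the distribution risk is to check a downloaded artifact against a hash recorded when the release was reviewed. The sketch below is a generic illustration using Python's standard library. The article does not describe how the malware reached LiteLLM users, so this is not a reconstruction of the incident or of any fix LiteLLM applied, and the artifact bytes and function names are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a lowercase hex string."""
    return hashlib.sha256(data).hexdigest()


def matches_pinned_hash(data: bytes, pinned_hex: str) -> bool:
    """Check data against a previously recorded (pinned) SHA-256 digest."""
    # compare_digest performs a constant-time comparison of the two strings.
    return hmac.compare_digest(sha256_hex(data), pinned_hex.lower())


# Hypothetical artifact contents; in practice these bytes would be
# read from the downloaded package file.
artifact = b"example package contents"

# In practice the pinned value is stored separately, e.g. in a lock file,
# at the time the release was reviewed. It is computed here for the demo.
pinned = sha256_hex(artifact)

tampered = artifact + b" plus injected code"

ok = matches_pinned_hash(artifact, pinned)
bad = matches_pinned_hash(tampered, pinned)
```

A check like this only detects changes made after the hash was recorded. It does not help if the reviewed release was already malicious.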

A Shift Toward Independent Auditing

One of the most important parts of LiteLLM’s response is its move toward independent third-party auditing.

Instead of relying on a bundled compliance provider, the company is separating:

- Compliance tooling

- Audit verification

This approach reduces the risk of conflicts of interest and increases credibility.

For customers, it provides stronger assurance that:

- Security checks are real

- Findings are unbiased

- Certifications reflect actual practices

Industry-Wide Implications

The fallout from this situation could ripple across the AI and startup ecosystem.

More Scrutiny on Compliance Startups

Companies offering compliance services may face tougher questions about how they operate.

Demand for Transparency

Clients may start asking for:

- Detailed audit reports

- Evidence of testing

- Independent verification

Higher Standards

The industry could move toward stricter expectations for:

- Security practices

- Data handling

- Certification processes

A Difficult Week for LiteLLM

For LiteLLM, the past week has been challenging.

The company faced:

- A malware incident

- Questions about its security posture

- Concerns about its compliance certifications

By acting quickly, it is trying to limit the damage and restore confidence among developers and partners.

What Happens Next

Several key developments will be worth watching:

- How quickly LiteLLM completes its new certifications

- Whether independent audits confirm strong security practices

- How customers respond to the changes

- Whether further evidence emerges in the Delve controversy

The situation is still evolving, and its long-term impact remains uncertain.

The Bigger Picture

This episode reflects a broader truth about the AI industry. As tools become more powerful and widely used, the stakes around security and trust increase. Startups are moving fast, but processes like compliance, auditing, and verification must keep up.

LiteLLM’s decision to walk away from Delve shows that:

- Trust can be lost quickly

- Transparency is becoming essential

- Companies must be ready to act when concerns arise

Conclusion

LiteLLM’s break from Delve marks an important moment for AI infrastructure and compliance. What began as a security incident has turned into a deeper conversation about how companies prove they are safe and trustworthy. By switching to Vanta and committing to independent audits, LiteLLM is trying to rebuild confidence after a difficult week.

At the same time, the controversy surrounding Delve serves as a reminder that certifications are only as strong as the processes behind them. For developers, businesses, and the wider tech community, the lesson is clear: security is not just about tools or labels. It depends on real systems, real checks, and real accountability.