“MIT researchers combine generative AI and wireless signals to detect hidden objects and map indoor environments.”

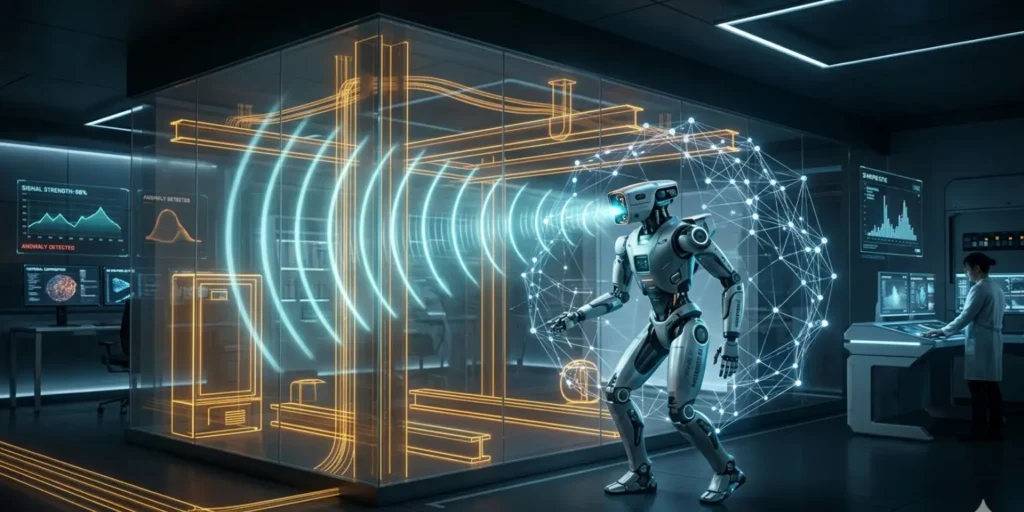

Researchers at the Massachusetts Institute of Technology have developed a powerful new way for machines to “see” objects hidden behind walls, furniture, and other obstacles. By combining wireless signals with generative AI, the system can reconstruct objects and even entire indoor environments with much higher accuracy than earlier wireless sensing methods.

This innovation could change how robots interact with the world. It may improve everything from warehouse automation to smart home safety. More importantly, it addresses a long-standing technical problem that has limited wireless sensing systems for years: reconstructions that capture only part of a hidden object.

The Idea Behind Seeing Through Obstacles

For over a decade, researchers have been exploring how wireless signals can help machines detect objects that are not directly visible. These systems rely on signals similar to Wi-Fi that can pass through materials like wood, plastic, drywall, and fabric. When these signals hit an object, they bounce back, carrying information about its shape and position.

Earlier systems used these reflections to create rough 3D images of hidden objects. For example, a robot could detect something like a box or a cup even if it was placed behind a barrier. However, the results were often incomplete. The system could usually see only parts of an object, not the full structure.

This limitation made it difficult for robots to interact with hidden objects. A robot might know something is there, but it would not fully understand its shape, size, or orientation. That made tasks like grasping or moving objects unreliable.

The Role of Generative AI

To solve this problem, researchers introduced generative AI into the process. Instead of relying only on raw signal data, the system now uses AI models to “fill in” the missing parts of an object. Generative AI is already widely used in tools like ChatGPT and Claude, where it creates text, images, or other content based on patterns it has learned. In this case, the AI model learns how objects typically look and uses that knowledge to complete partial reconstructions.

The system first builds a rough shape of a hidden object using wireless reflections. Then, the AI model analyzes that partial shape and predicts what the missing parts should look like. This results in a much more complete and realistic 3D reconstruction. This step marks a major improvement. Instead of simply detecting objects, machines can now better understand them.

Overcoming a Major Technical Challenge

One of the biggest challenges in wireless vision is “specular reflection”: wireless signals tend to bounce off a surface in a single direction, like light reflecting off a mirror. As a result, a sensor receives reflections only from surfaces angled to send the signal back toward it. Large portions of an object, such as the sides or bottom, remain invisible, which leaves gaps in the reconstructed image.
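The mirror-like behavior described above can be illustrated with a small geometric sketch. It is not the researchers' model: it assumes an idealized 2D setup with the sensor acting as both transmitter and receiver, and the function names and the 5-degree tolerance are hypothetical choices for illustration. A surface patch counts as "seen" only when its mirror reflection heads back toward the sensor.

```python
import math

def reflect(d, n):
    """Mirror-reflect incoming direction d about the unit surface normal n."""
    dot = sum(di * ni for di, ni in zip(d, n))
    return tuple(di - 2.0 * dot * ni for di, ni in zip(d, n))

def returns_to_sensor(normal_angle_deg, tolerance_deg=5.0):
    """True if a patch whose normal is tilted normal_angle_deg away from the
    sensor direction reflects the signal back to the sensor (assumed tolerance)."""
    # The sensor sits along +x from the patch, so the signal travels in -x.
    incoming = (-1.0, 0.0)
    a = math.radians(normal_angle_deg)
    normal = (math.cos(a), math.sin(a))
    r = reflect(incoming, normal)
    # Angle between the reflected ray and the direction back to the sensor (+x).
    cos_angle = max(-1.0, min(1.0, r[0]))
    return math.degrees(math.acos(cos_angle)) <= tolerance_deg

# Tilt the patch in 10-degree steps and record which tilts the sensor can see.
visible = [a for a in range(0, 91, 10) if returns_to_sensor(a)]
```

In this toy setup a tilt of `a` degrees swings the reflected ray by `2a` degrees, so only the patch facing the sensor almost head-on ends up in `visible`. That is the gap the article describes.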

In the past, researchers tried to solve this using physics-based models. While helpful, these methods had limited accuracy. They could not fully recover the missing details. The new approach uses generative AI to overcome this limitation. Instead of trying to directly measure every surface, the system intelligently predicts what cannot be seen. This allows it to create more complete and useful representations of hidden objects.

Training the AI Without Real Data

Training a generative AI model usually requires large datasets. For example, image-based AI systems are trained on millions of photos. However, there is no large dataset of wireless signal reflections available. To address this, researchers created synthetic data. They took existing image datasets and modified them to behave like wireless reflections. This included adding noise and simulating how signals bounce off surfaces.
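A rough sketch of how such synthetic training pairs might be produced is shown below. It is an illustration under stated assumptions, not the researchers' pipeline: the complete shape is a sphere sampled as points, the "wireless view" keeps only patches facing the sensor within an assumed 20-degree cone, and Gaussian noise stands in for measurement error. All names and parameter values are hypothetical.

```python
import math
import random

def sphere_points(n, radius=1.0):
    """Roughly even points on a sphere centred at the origin (Fibonacci lattice)."""
    golden = math.pi * (3.0 - math.sqrt(5.0))
    pts = []
    for i in range(n):
        z = 1.0 - 2.0 * (i + 0.5) / n
        r = math.sqrt(1.0 - z * z)
        pts.append((radius * r * math.cos(golden * i),
                    radius * r * math.sin(golden * i),
                    radius * z))
    return pts

def make_training_pair(complete, sensor_dir=(0.0, 0.0, 1.0),
                       max_angle_deg=20.0, noise_std=0.01, seed=0):
    """Return (partial, complete): a noisy, sensor-facing subset and the full shape."""
    rng = random.Random(seed)
    cos_limit = math.cos(math.radians(max_angle_deg))
    partial = []
    for p in complete:
        norm = math.sqrt(sum(c * c for c in p))
        normal = tuple(c / norm for c in p)  # sphere normal points outward
        facing = sum(a * b for a, b in zip(normal, sensor_dir))
        if facing >= cos_limit:  # assumed rule: only near-facing patches return signal
            partial.append(tuple(c + rng.gauss(0.0, noise_std) for c in p))
    return partial, complete

complete_shape = sphere_points(500)
partial_view, target = make_training_pair(complete_shape)
```

A completion model would then be trained to map `partial_view` to `target`. Here only a small cap of the sphere survives the mask, which mimics how little of an object a single sensor position captures.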

By embedding the physics of wireless signals into this synthetic data, they were able to train the AI model effectively. This approach saved years of data collection and made the system practical much sooner. It also shows how AI can adapt knowledge from one domain to another, which is a growing trend in modern machine learning.

Introducing the Wave-Former System

The first system developed using this approach is called Wave-Former. It focuses on reconstructing individual objects that are hidden from view. Wave-Former works in three main steps. First, it collects wireless signal reflections from the environment. Second, it creates a rough estimate of the object’s shape. Third, it uses a generative AI model to complete the missing parts and refine the structure.
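The three steps can be sketched as a small pipeline. This is a hypothetical outline, not Wave-Former's code: reflections are assumed to arrive as (location, strength) pairs, the rough shape is taken to be the strong reflections, and a simple mirror-symmetry rule stands in for the generative model so that the example runs on its own.

```python
from typing import Callable, List, Tuple

Point = Tuple[float, float, float]

def reconstruct(reflections: List[Tuple[Point, float]],
                complete: Callable[[List[Point]], List[Point]],
                min_strength: float = 0.5) -> List[Point]:
    """Run the three steps: measured reflections -> rough shape -> completed shape."""
    # Step 1 is the input: reflections already collected from the environment.
    # Step 2: keep strong reflections as a rough, partial shape (assumed threshold).
    partial = [p for p, strength in reflections if strength >= min_strength]
    # Step 3: hand the partial shape to a completion model.
    return complete(partial)

def mirror_completion(partial: List[Point]) -> List[Point]:
    """Stand-in for the generative model: mirror the points across the x = 0 plane."""
    mirrored = [(-x, y, z) for x, y, z in partial]
    return partial + [m for m in mirrored if m not in partial]

reflections = [((0.1, 0.0, 0.0), 0.9),
               ((0.2, 0.1, 0.0), 0.8),
               ((0.5, 0.5, 0.0), 0.1)]  # weak return, dropped in step 2
shape = reconstruct(reflections, mirror_completion)
```

Passing the completion step in as a function keeps the sensing and the learned model separate, so a trained generative model could replace `mirror_completion` without touching the first two steps.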

The system was tested on about 70 everyday objects, including boxes, cans, fruit, and utensils. These objects were hidden behind different materials such as cardboard, wood, and plastic. The results showed a significant improvement. Wave-Former increased reconstruction accuracy by nearly 20 percent compared to earlier methods. This makes it much more reliable for real-world applications.

Expanding to Full Room Reconstruction

The researchers did not stop at individual objects. They also developed a second system that can reconstruct entire indoor environments. This system uses a single stationary wireless sensor to capture reflections from people moving in a room. As a person moves, the signals bounce around the environment, creating complex patterns.

These patterns include what researchers call “ghost signals.” These are secondary reflections that bounce multiple times before returning to the sensor. While often treated as noise, they actually contain valuable information about the space. By analyzing how these signals change over time, the system can build a rough map of the room. Then, a generative AI model refines this map into a more detailed reconstruction.
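One way to see why ghost signals carry information about the room is the classic mirror-image idealization: a reflection that bounces off a flat wall appears to come from the person's mirror image behind that wall. The sketch below assumes exactly that simplified case in 2D; it is not the RISE algorithm, and the function name is hypothetical. Given the person's direct position and the ghost position, the wall lies on the perpendicular bisector between the two.

```python
import math

def wall_from_ghost(direct, ghost):
    """Estimate a flat wall from a direct position and its mirror-image ghost.

    Returns (point_on_wall, unit_normal): the wall is assumed to be the
    perpendicular bisector of the segment from direct to ghost.
    """
    diff = tuple(g - d for d, g in zip(direct, ghost))
    length = math.hypot(*diff)
    if length == 0.0:
        raise ValueError("direct and ghost positions coincide; no wall implied")
    midpoint = tuple((d + g) / 2.0 for d, g in zip(direct, ghost))
    normal = tuple(c / length for c in diff)
    return midpoint, normal

# A person at (1, 2) with a ghost observed at (5, 2) implies a wall along x = 3.
point_on_wall, wall_normal = wall_from_ghost((1.0, 2.0), (5.0, 2.0))
```

As the person moves, each direct and ghost pair yields another point on the wall, which suggests how tracking these signals over time can trace out the room's boundaries.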

The RISE System and Its Performance

This second system is known as RISE. It uses the same combination of wireless sensing and generative AI to understand entire spaces rather than just objects.

RISE was tested using more than 100 human movement patterns. On average, the system produced reconstructions that were about twice as accurate as previous techniques.

This level of improvement opens up new possibilities. Instead of needing multiple sensors or cameras, a single wireless device can now provide a clear understanding of an indoor environment.

Real-World Applications

The potential uses of this technology are wide-ranging and practical. One key area is warehouse automation. Robots could use this system to check the contents of packages without opening them. This could reduce errors and lower the number of returned items.

In smart homes, the technology could improve safety. For example, a system could detect where a person is in a room without using cameras. This could help robots avoid collisions or assist elderly individuals more effectively.

Another advantage is privacy. Unlike cameras, wireless signals do not capture visual images. This makes the system suitable for environments where privacy is important, such as homes, hospitals, and workplaces. The technology could also be useful in search and rescue operations. Robots equipped with wireless vision could locate people trapped behind debris after disasters.

A Step Toward Smarter Robots

This breakthrough represents a shift in how machines understand their surroundings. Instead of relying only on what they can see, robots can now interpret hidden information using AI and wireless signals.

This makes them more capable and adaptable. Tasks that were once difficult or unreliable can now be performed with greater confidence.

The combination of sensing and AI also reflects a broader trend in robotics. Machines are becoming more intelligent not just because of better hardware, but because of smarter software that can interpret complex data.

Looking Ahead

The researchers plan to continue improving the system. One goal is to increase the level of detail in the reconstructions. Another is to develop larger AI models specifically designed for wireless signals.

These models could work in a similar way to advanced AI systems like Gemini, but focused on interpreting physical environments instead of text or images. Such developments could unlock even more applications, from advanced robotics to security systems and beyond.

Conclusion

The integration of generative AI with wireless sensing marks a major step forward in machine perception. By solving the problem of incomplete data, researchers have created a system that can see beyond obstacles and understand hidden environments.

This technology has the potential to transform industries, improve safety, and make robots more useful in everyday life. It also highlights the growing power of AI to enhance traditional systems and overcome long-standing challenges.

As research continues, wireless vision could become a standard feature in future smart systems, bringing us closer to a world where machines can truly understand the spaces around them, even when those spaces are hidden from view.